Various AI implementations do this. For example, the Atom AI logo generator occasionally leaves out a letter, and ChatGPT sometimes simply makes up believable but nonexistent references if you insist that it give scholarly ones.

This means we all should be careful with AI, much more so than I think most people, and even most business leaders, are. But as Hinton and others stress, AI is meant to emulate how people think, and people have those same imperfections: they sometimes exaggerate or even make things up. It is important to think of AI in this way.

Confabulation is apparently the preferred technical term for these.

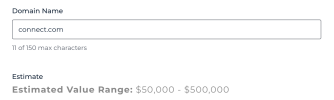

Sure. I think the About on the site makes it clear that this, like virtually every application of AI, depends on one or more of the big models. And yes, you could undoubtedly get something similar directly by writing the right prompts. I view this as an ease-of-use question, frankly like almost every other AI agent right now.

I would never use only this, or any other, valuator. It is one way of looking at a name. There is definitely value in looking at comparator sales, and probably value in considering algorithmic valuators that concentrate on various aspects of a name.

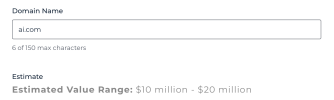

A lot of people are concerned about the variability in the estimates. I agree with @SuperBrander that if there is much variability, it will make this not very useful as a way to support the value of a name to a potential buyer. On the other hand, if you took the 20 best art experts and had them look at a novel new work, I am pretty sure you would get 20 different price ranges. I am also pretty sure that if you asked the same expert to look again six months later, they would not give exactly the same range.

Each domain name is a unique digital asset. If one has AI, rather than algorithmic, approaches, it is to be expected the range will be different in different queries, because each one is like asking a new expert, to some degree.

@Michael's concern about pricing being based on vague generalities that the AI 'read' in its training is a valid one. I don't know enough about the training to comment more.

For me,

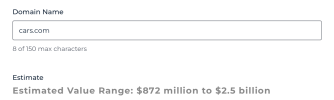

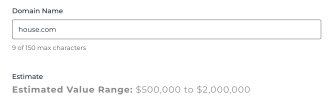

this was the test. I tried it with a bunch of names mainly that I had for sale, and a few that I had sold, with a price in mind for each. Most of the time the price I had in mind fell somewhere in the range suggested by the tool. Also, I checked it with a few names that I honestly feel are special, but that other valuators treated as mundane, kind of are these names better than most of the very mundane names in my portfolio. A number of times this tool indeed placed them higher, which increased my view of it.

I have not yet done a truly systematic look. For example, I have not really looked at what it says for elite-level names, and I have tried only a handful of the type of names that BrandBucket and Atom handle. But for a recent release, I am impressed. Still, all the usual caveats about any automated valuator apply.

Most of the domain experts are, generally rightly, highly critical of valuing domains in any automated way. It would be interesting to have a panel of 10 experts agree to do a truly blind valuation of a set of names, both to see the variability among the experts and to see how frequently the expert price fell somewhere in the range suggested by this tool.
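If anyone wanted to tally such a comparison, a minimal sketch might look like the following. Everything in it is an assumption for illustration: the names, the prices, and the ranges are made up, the function names are hypothetical, and using the median panel price and a high/low ratio is just one simple way to summarize the results.

```python
from statistics import median


def hit_rate(prices, tool_ranges):
    """Fraction of names whose price falls inside the tool's suggested range."""
    hits = sum(
        1
        for name, price in prices.items()
        if tool_ranges[name][0] <= price <= tool_ranges[name][1]
    )
    return hits / len(prices)


def spread_ratio(expert_prices):
    """Highest expert price divided by the lowest: a crude variability measure."""
    return max(expert_prices) / min(expert_prices)


# Hypothetical, made-up numbers purely for illustration (not real valuations).
panel = {
    "example-one.com": [1200, 2500, 900, 3000],
    "example-two.com": [400, 650, 5000, 800],
}
tool_ranges = {
    "example-one.com": (1000, 4000),
    "example-two.com": (300, 1200),
}

# Summarize each name by the median of the panel's blind valuations.
median_prices = {name: median(prices) for name, prices in panel.items()}

print("share of names with median price in range:", hit_rate(median_prices, tool_ranges))
for name, prices in panel.items():
    print(name, "expert high/low ratio:", round(spread_ratio(prices), 2))
```

The same `hit_rate` function would work for the informal check of comparing one's own asking prices against the suggested ranges.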

I have for years bristled every time I see an appraisal tell me the value of a name is $1473 (as though anyone knows it precisely). It is refreshing to at least see an instrument that suggests $1000 to $4000 is the range.

Bob